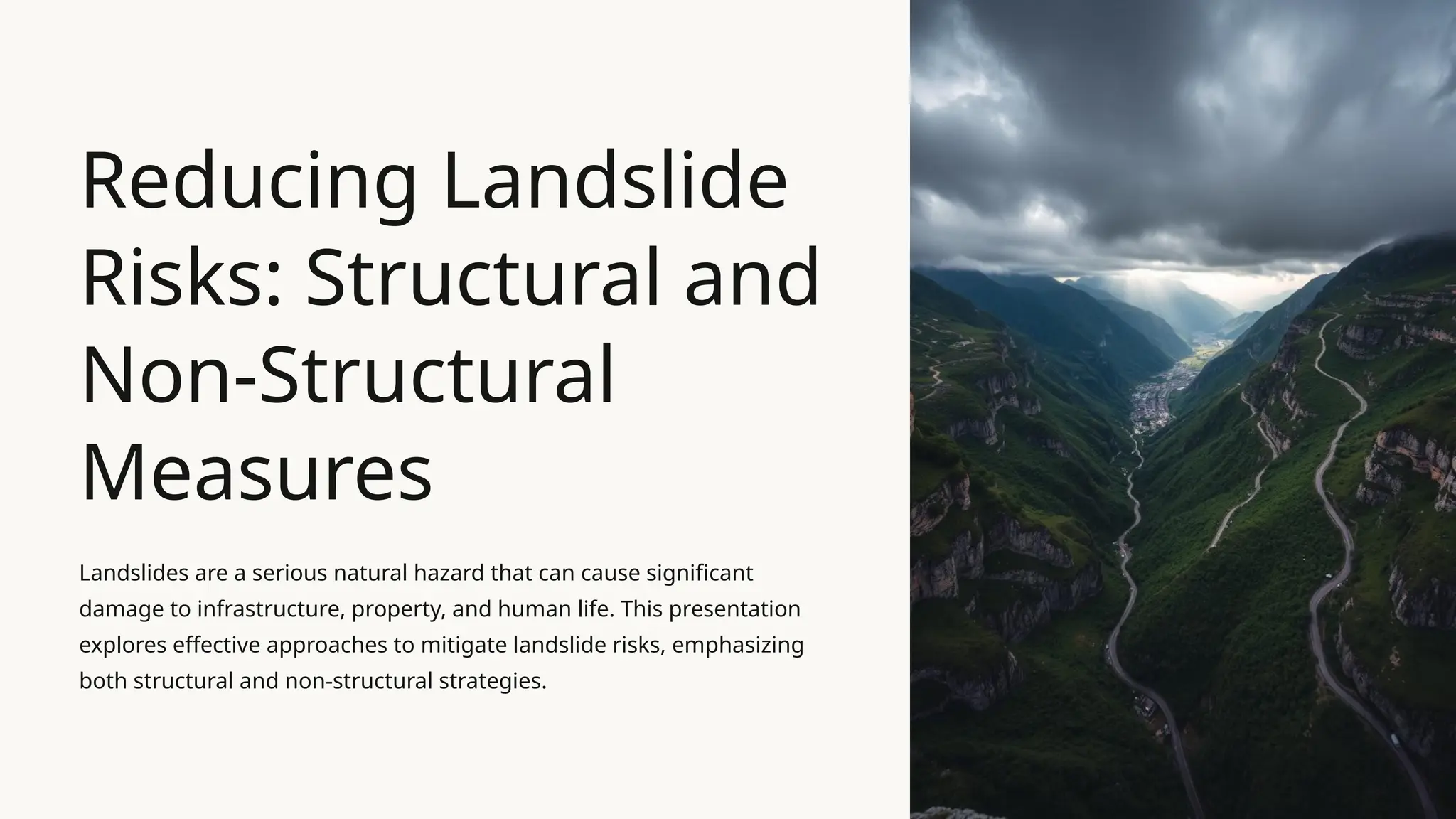

The recent sit-down between the White House and Anthropic CEO Dario Amodei was not the routine photo-op the press briefing suggested. While official channels framed the meeting as a proactive step toward safe innovation, sources close to the administration describe a much darker motive: the federal government is deeply alarmed by Mythos AI. This isn’t a fear of robots taking over the world. It is a specific, technical fear about the erosion of information integrity and the potential for large-scale biological or cyber warfare orchestrated by autonomous systems that no longer require human oversight.

Anthropic was founded by former OpenAI researchers who felt the path toward "Artificial General Intelligence" was becoming reckless. By bringing Amodei to the table, the executive branch is signaling that it no longer trusts the market to self-regulate. The "Constitutional AI" approach pioneered by Anthropic, in which a model is trained to follow a written set of principles rather than relying only on human feedback, is being viewed as a potential blueprint for federal regulation.

The Mythos Shadow

To understand why the White House is scrambling, you have to look at the "Mythos" problem. In industry shorthand, Mythos refers to the point at which an AI model hallucinates so confidently that its output overrides factual databases, creating a self-sustaining loop of misinformation. If a model like Mythos AI reaches sufficient scale, it doesn't just give wrong answers; it pollutes the data pool that every other AI trains on. It is a digital contagion.

The administration’s concern is that we are months, not years, away from a scenario where the open internet becomes a feedback loop of AI-generated junk. That would make it impossible for government agencies to verify facts during a national crisis. When the President’s advisors talk about "working together" with Anthropic, they are really talking about building a firewall against the collapse of digital reality.
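The feedback loop described in this section resembles what researchers studying models trained on AI-generated data call "model collapse." The toy simulation below is a minimal sketch of that dynamic under heavy simplifying assumptions: each "generation" of a model is just a normal distribution fitted to a small sample drawn from the previous generation, with no fresh human data mixed in. It illustrates loss of diversity rather than misinformation as such, and the function name `simulate_collapse` and its parameters are hypothetical choices for illustration, not anything attributed to the meeting or to Anthropic.

```python
import random
import statistics


def simulate_collapse(generations: int = 100, sample_size: int = 5, seed: int = 0) -> list[float]:
    """Toy 'model collapse' loop (hypothetical illustration).

    Generation 0 stands in for the real data distribution, a standard normal.
    Each later generation is a normal distribution fitted (maximum likelihood)
    to a small sample drawn from the previous generation, so every "model"
    is trained only on the output of the model before it.
    Returns the fitted standard deviation at each generation.
    """
    rng = random.Random(seed)
    mu, sigma = 0.0, 1.0
    history = [sigma]
    for _ in range(generations):
        # Draw "synthetic data" from the current model.
        sample = [rng.gauss(mu, sigma) for _ in range(sample_size)]
        # Refit the next model on that synthetic data only.
        mu = statistics.fmean(sample)
        sigma = statistics.pstdev(sample, mu)
        history.append(sigma)
    return history


if __name__ == "__main__":
    stds = simulate_collapse()
    for gen in (0, 10, 25, 50, 100):
        print(f"generation {gen:3d}: fitted std = {stds[gen]:.3g}")
```

Because each refit sees only a handful of samples, the fitted spread tends to shrink generation after generation until the tails of the original distribution vanish. That is the intuition behind proposals to keep a share of verified, human-written data in every training mix.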

The Price of Safety

Washington usually moves at a glacial pace, but the speed of this outreach suggests the internal data is alarming. The Department of Commerce is reportedly looking into "compute caps": limits on the total amount of computation a company can use to train a single model. This would be a radical departure from the hands-off approach that defined the early days of the internet.
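Compute limits of this kind are typically stated in total floating-point operations (FLOPs) used during training rather than in hardware power. A widely used rule of thumb from the scaling-law literature estimates dense-transformer training compute as roughly 6 × parameters × training tokens. The sketch below applies that approximation against a threshold. The 1e26 figure is an assumption borrowed from the reporting threshold in the 2023 US executive order on AI (EO 14110), not a number reported from this meeting, and the function names are hypothetical.

```python
# Hypothetical reporting threshold in total training operations. The 1e26
# value mirrors the figure used in US Executive Order 14110 (2023); it is an
# assumption here, not a number from the meeting described in this article.
REPORTING_THRESHOLD_FLOPS = 1e26


def estimate_training_flops(n_params: float, n_tokens: float) -> float:
    """Rough training compute for a dense transformer: about 6 * N * D.

    The factor 6 counts roughly 2 FLOPs per parameter per token for the
    forward pass and roughly 4 for the backward pass. It ignores attention
    overhead and hardware efficiency, so treat the result as an estimate.
    """
    return 6.0 * n_params * n_tokens


def exceeds_threshold(n_params: float, n_tokens: float,
                      threshold: float = REPORTING_THRESHOLD_FLOPS) -> bool:
    """Return True if the estimated training run is above the threshold."""
    return estimate_training_flops(n_params, n_tokens) > threshold


if __name__ == "__main__":
    # Illustrative sizes only, not tied to any particular product.
    runs = {
        "70B params, 15T tokens": (70e9, 15e12),
        "1T params, 20T tokens": (1e12, 20e12),
    }
    for name, (n, d) in runs.items():
        flops = estimate_training_flops(n, d)
        print(f"{name}: ~{flops:.2e} FLOPs, report={exceeds_threshold(n, d)}")
```

For these illustrative sizes, the first run comes out around 6.3e24 FLOPs, well under the assumed threshold, while the second lands near 1.2e26 and would trigger a report. The point of a compute-based rule is that it can be checked from two numbers a lab already tracks, without inspecting the model itself.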

Amodei finds himself in a complicated position. He is a true believer in safety, yet he leads a for-profit entity that needs massive investment to survive. If he agrees to strict government oversight, he risks falling behind competitors who might choose to operate in jurisdictions with fewer rules. It is a high-stakes trade-off. He gets a seat at the most powerful table in the world, but he might have to hand over the keys to his company’s intellectual property to get it.

Beyond the Public Narrative

The public discourse focuses on job losses and bias. Those are valid concerns, but they distract from the structural risks discussed behind closed doors. The real conversation is about "dual-use" capabilities. A model that can write a flawless legal brief can also be used to identify vulnerabilities in the national power grid or help design a new strain of a pathogen.

The White House wants a "kill switch" for the most advanced models, and it is asking for a level of transparency that most Silicon Valley firms consider intrusive. Anthropic’s willingness to even engage in these talks suggests the company sees the writing on the wall. It would rather help write the regulations than be crushed by them later.

The Problem With Constitutional AI

While the "Constitutional" approach sounds great on paper, it has a glaring flaw: who writes the constitution? If the government dictates the values an AI must follow, we move from a world of technological risk to a world of state-sponsored thought control. The same mechanism that trains an AI to avoid "dangerous" topics can easily be tuned to suppress political dissent or inconvenient truths.

This is the tension that hung over the meeting. The administration wants a tool that is safe, but "safety" is a subjective term in politics. For some, safety means preventing a cyberattack. For others, it means ensuring the AI doesn't say things that contradict the current administration's platform.

Technical Guardrails or Political Chains

The mechanism being discussed is "red-teaming" at a scale never seen before: hiring thousands of experts to try to break the AI, forcing it to reveal hidden biases or dangerous capabilities before it is released to the public. The cost of this process is astronomical. By mandating this level of testing, the government is effectively ensuring that only the largest, best-funded companies can compete.

Small startups and open-source developers are being squeezed out of the conversation. If you can’t afford a $50 million safety audit, you can’t play. The result is a cozy arrangement in which the government and a few "vetted" companies control the most powerful technology in human history. It is regulatory capture disguised as a public safety initiative.

The Inevitability of the Leak

History suggests these models cannot be kept in a box forever. The NSA and CIA, whose systems are among the most secure in the world, have both suffered leaks. Even if Anthropic builds the safest, most "governed" AI in the world, the model’s underlying weights are still just a file of numbers that can be copied. Once those weights are out, anyone can run the model outside Anthropic’s controls and fine-tune its guardrails away.

The Mythos AI fear is rooted in the idea that someone, somewhere, will release an unaligned model with no "constitution" and no ethical brakes. When that happens, none of the meetings in the West Wing will matter. The White House is trying to win a race where the finish line keeps moving. It is trying to regulate a liquid.

The Shift to Hardware Regulation

Because software is so hard to track, the real strategy is shifting toward the physical world. The government is looking at the supply chain of high-end GPUs, the chips that power these systems. If it can track every one of those chips, it can track who is building the next Mythos. That would be a massive expansion of the surveillance state into high-tech manufacturing.

Such tracking would require a level of international cooperation that does not currently exist. China is not going to agree to US-led chip tracking, which means we are headed toward a fractured digital world where different regions have different "realities" powered by different AI models.

Why Anthropic is the Chosen One

Anthropic’s "Claude" model is often cited as the most "helpful and harmless" AI on the market. It is less likely to give you a recipe for a bomb or use a racial slur than its competitors. This makes it the perfect partner for a risk-averse government. But "harmless" can also mean "useless" if the filters are too tight.

If the government forces Anthropic to be the standard-bearer, we might end up with a primary AI that is so neutered it cannot solve the complex problems we actually need it for. We are trading utility for a sense of security that might be entirely illusory.

The Financial Incentives of Fear

There is a cynical take that shouldn't be ignored: fear sells. For a company like Anthropic, highlighting the "dangers" of AI is a great way to justify its business model, and it creates a barrier to entry for anyone who lacks its particular "safety" technology. For the White House, the "Mythos" threat provides a convenient excuse to exert more control over a sector of the economy that has remained stubbornly independent.

Neither side is an unbiased actor. They both have a vested interest in making the public believe that the technology is more dangerous—and more advanced—than it actually is. It is a classic power play. By the time the public realizes the stakes, the rules will already be written.

The Technical Deadlock

We are currently in a period of "capability overhang," in which models have abilities that even their creators don't fully understand. The discussion at the meeting reportedly touched on the "black box" problem: if we don't know how an AI reaches a decision, we can't truly say it is safe.

Current research into "mechanistic interpretability," which tries to reverse-engineer the internal circuits of a neural network much as neuroscientists map the brain, is still in its infancy. We are trying to regulate a technology while we are still figuring out the basic physics of how it works. It is like trying to write aviation laws before we understand lift.

The Immediate Practical Reality

Expect an executive order within the next quarter that formalizes these "partnerships." It will likely require AI companies to report to the government whenever they start a training run that exceeds a certain amount of "compute." It will also likely mandate that these companies give the government early access to their models for "safety testing."

This isn't a suggestion. It is a mandate in all but name. Companies that don't comply will find their federal contracts disappearing and their regulatory environment becoming much more hostile.

The "move fast and break things" era in AI is over. The government has moved in, and it isn’t leaving. Whether this actually makes the world safer from the Mythos AI threat, or just gives the state a more powerful tool for control, remains the most important question of our time. The move toward "Constitutional AI" is the first step toward a digital world where every answer is filtered through a pre-approved lens. Stop looking at the press releases and start looking at the hardware. Control the chips, and you control the truth.